At 4:03 AM on April 3, Google DeepMind officially launched the new open-source model series

Comprehensive Specifications: The “Four Knights” from Mobile to Workstations

31B Dense (Flagship Edition): 31 billion parameters, all active, with an ultra-long 256K context window. It ranks third on the Arena AI open-source leaderboard. The unquantized version can run on a single H100.

26B A4B MoE (Cost-Effective King): Uses a mixture-of-experts architecture with 25.2 billion total parameters, of which only 3.8 billion are activated. Its inference speed is close to that of a 4B model, but its quality far exceeds similar products, ranking sixth on the leaderboard.

E4B & E2B (Edge Elite): Optimized for mobile phones and embedded devices. Per-Layer Embeddings technology brings the effective parameter counts down to 4.5 billion and 2.3 billion, respectively. E2B's memory usage can drop below 1.5 GB on some devices.
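As a rough sanity check on the figures above, the weight footprint of the 31B model and the active share of the MoE model can be estimated with simple arithmetic. This is a minimal sketch, not an official sizing guide: the 2 bytes per parameter (bf16) and the 80 GB H100 capacity are assumptions not stated in the article, and the estimate ignores KV cache and activation memory.

```python
# Back-of-the-envelope sizing from the figures quoted in the article.
# Assumptions (not from the article): bf16 weights (2 bytes per parameter)
# and an 80 GB H100. KV cache and activations are ignored.

H100_MEMORY_GB = 80  # assumed H100 variant


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory needed for the weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


def active_fraction(active_params: float, total_params: float) -> float:
    """Share of a mixture-of-experts model's parameters used per token."""
    return active_params / total_params


dense_31b_gb = weight_memory_gb(31e9, 2)
moe_share = active_fraction(3.8e9, 25.2e9)

fits = dense_31b_gb < H100_MEMORY_GB
print(f"31B dense, bf16 weights: {dense_31b_gb:.0f} GB (fits in {H100_MEMORY_GB} GB: {fits})")
print(f"26B A4B active share per token: {moe_share:.1%}")
```

Under these assumptions the weights alone come to about 62 GB, and roughly 15% of the MoE model's parameters are active per token, which is consistent with the claim that it runs at close to the speed of a 4B model.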

Performance Boost: Code and Math Capabilities Achieve Generational Leap

Compared to the previous generation Gemma 3 27B, the core metrics of

Math Competitions: AIME 2026 test results jumped from 20.8% to 89.2%.

Programming Evolution: The Codeforces Elo rating increased from 110 to 2150, and the LiveCodeBench score rose from 29.1% to 80.0%, making it one of the most usable open-source coding assistants currently available.

Comprehensive Reasoning: Graduate-level science question answering (GPQA Diamond) score nearly doubled from 42.4% to 84.3%.

Multilingual Ability: Native support for over 140 languages, with an MMMLU score of 88.4%.
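To put the reported jumps side by side, the short script below computes the absolute change and the new-to-old ratio for each benchmark. The numbers are the ones quoted in this article; the `gain` helper and the table layout are illustrative only.

```python
# Scores as reported in the article: (previous generation, new model).
reported = {
    "AIME 2026 (%)": (20.8, 89.2),
    "LiveCodeBench (%)": (29.1, 80.0),
    "GPQA Diamond (%)": (42.4, 84.3),
    "Codeforces Elo": (110, 2150),
}


def gain(old: float, new: float) -> tuple[float, float]:
    """Return (absolute change, ratio of new score to old score)."""
    return new - old, new / old


for name, (old, new) in reported.items():
    delta, ratio = gain(old, new)
    print(f"{name:<20} {old:>7} -> {new:>7}  (+{delta:.1f}, x{ratio:.2f})")
```

The ratio for GPQA Diamond comes out just under 2, matching the "nearly doubled" description. For Codeforces, the difference in rating points is the meaningful figure; an Elo ratio should not be read as "times better."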

Core Features: Built-in “Thinking Mode” and Agent Genes

Thinking Mode: The model can reason internally before outputting an answer, which greatly improves accuracy on multi-step planning tasks.

Native Agent Support: Supports function calling and structured JSON output. Google simultaneously released an open-source Agent Development Kit (ADK), enabling edge-side models to act as “intelligent agents.”

Deep Multimodal: All versions support image and video input. Even small model versions come with an additional audio encoder, supporting speech recognition and translation.
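To make the function-calling feature concrete, here is a minimal sketch of how an application might consume a structured JSON function call and route it to local code. Everything in it is hypothetical: the `{"name": ..., "arguments": ...}` shape, the `get_weather` tool, and the `dispatch` helper are assumptions for illustration, not the model's actual output format or the ADK's API.

```python
import json
from typing import Any, Callable


# Hypothetical tool; the name and signature are illustrative only.
def get_weather(city: str) -> str:
    return f"(stub) weather for {city}"


TOOLS: dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def dispatch(model_output: str) -> Any:
    """Parse a JSON function call and run the matching local function.

    Assumes the shape {"name": str, "arguments": object}. The real format
    depends on the model's chat template and the serving stack.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc

    if not isinstance(call, dict):
        raise ValueError("expected a JSON object")

    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ValueError("'arguments' must be a JSON object")

    return TOOLS[name](**args)


# A hand-written string stands in for real model output here.
print(dispatch('{"name": "get_weather", "arguments": {"city": "Zurich"}}'))
```

Validating the tool name and argument types before calling anything matters in an agent setting, since the model's output is untrusted input to the host application.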

Industry Insights: “Power Reorganization” in the Open Source Field

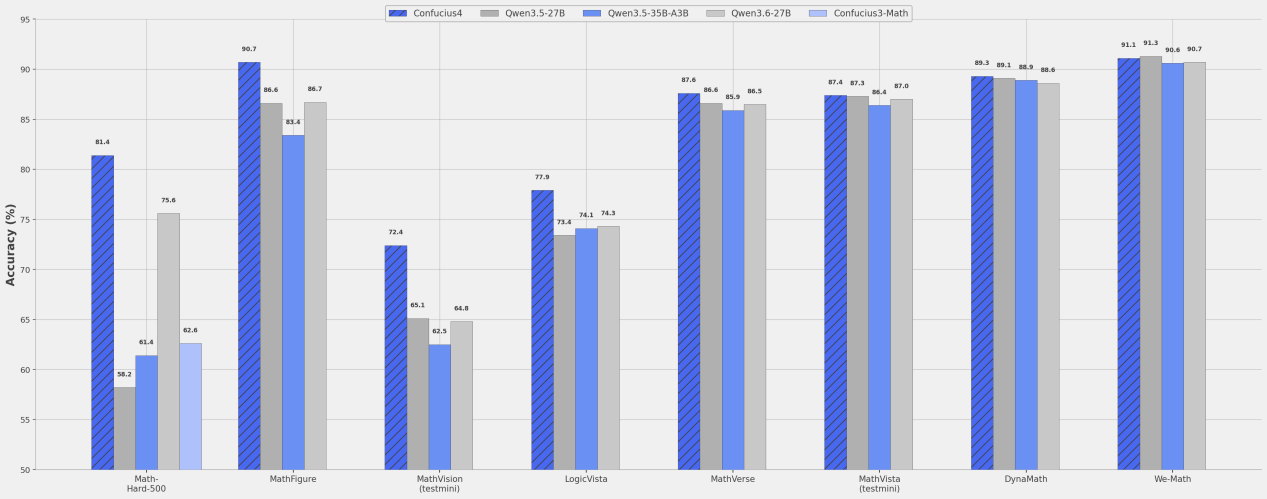

Over the past year, Chinese open-source models (such as DeepSeek, Qwen, and GLM) have evolved rapidly, and Google's influence in the open-source field weakened for a time. The release of

Conclusion: When Big Tech Starts Talking About “Sincerity”